Abstract

Testing of 2 Application Ranking Approaches at the National Institutes of Health Center for Scientific Review

Richard K. Nakamura,1 Amy L. Rubinstein,1 Adrian P. Vancea,1 Mary Ann Guadagno1

Objective

The National Institutes of Health (NIH) is a US agency that distributes approximately $20 billion each year for research awards based on a rigorous peer review that provides a merit score for each application. Each application's final score is the mean of the scores from its reviewers, and applications are then ranked by percentile. In 2009, the NIH changed its scoring system from a 40-point scale to a 9-point scale. There have been concerns that this new scale, which is functionally cut in half for the 50% of applications that are considered competitive, is not sufficient to express a study section’s judgment of relative merit. The question guiding these pilot studies was whether alternative methods of prioritizing applications could reduce the number of tied scores or increase the dispersion of rankings.

Design

The Center for Scientific Review has been testing alternative scoring systems, including (A) postmeeting ranking of the top-scoring applications, in which reviewers rank-order the 10 best applications at the end of a review, and (B) giving reviewers the option of adding or subtracting a half point during final scoring of applications following discussion. These alternatives were compared against standard scoring in real study sections to see if they could improve prioritization of applications and reduce the number of tied scores. Reviewer opinions of the ranking systems were assessed, including through surveys for alternative B.

Results

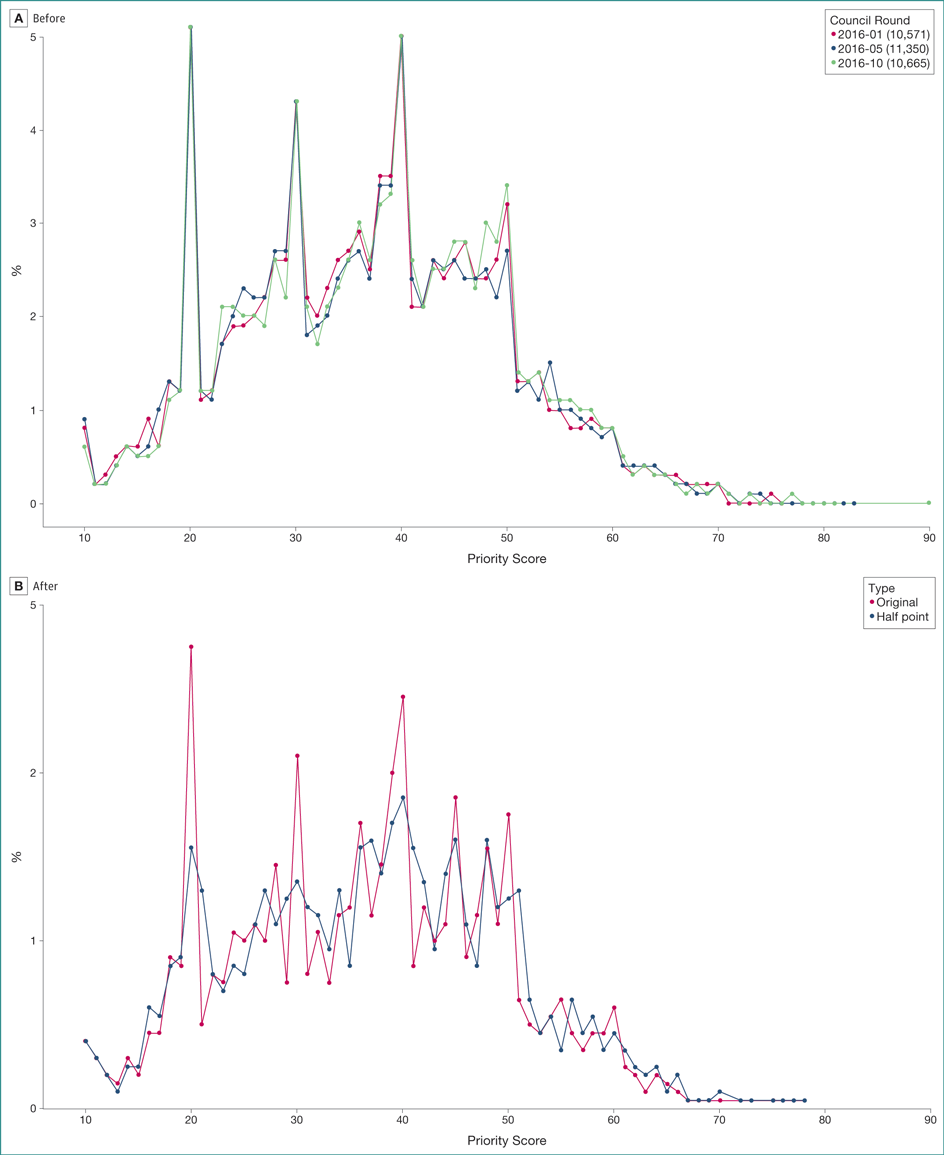

(A) Postmeeting ranking was applied to 836 applications across 32 study sections; it often produced rankings inconsistent with the scores that reviewers had given to the applications. The best 2 or 3 scored applications were generally agreed on, but disagreement among reviewers increased as average scores became poorer. Most reviewers liked the ranking system, but there was more hesitation about recommending adoption of this practice over the current scoring system. (B) The option to freely add or subtract a half point in final voting was applied to 1371 applications across 39 study sections; the half-point system helped to spread scores and halved the number of ties (Figure). It was also recommended for adoption by 72% of reviewers in postmeeting surveys.

Figure. Distribution of National Institutes of Health Grant Application Scores by Percent Before and After Use of the Half-Point Option

A, Distribution of final scores for grant applications as a percent of all scores (32,586 applications). Each application received scores from many reviewers; these were averaged, multiplied by 10, and rounded to the nearest unit. Possible final scores for each application ranged from 10 to 90. Dates refer to the cycle of review, and the number is the quantity of applications with scores. In January 2016, 10,571 applications received scores; in May 2016, 11,350 applications received scores; and in October 2016, 10,665 applications received scores. B, Comparison of the distribution of original average scores (red) with scores for which reviewers were allowed to add or subtract a half point (black). Average scores are rounded to the nearest whole number to establish ranking. Original refers to the proportion of scores at each possible score level under normal whole-digit scoring. Half point refers to the proportion of scores at each possible score level when reviewers used whole digits plus or minus a half point.
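The score arithmetic described in the legend can be illustrated with a minimal sketch. It assumes that ties at exactly one-half are rounded up (the abstract does not state the rounding rule), and the function names `final_score` and `count_tied` and the reviewer scores are hypothetical, chosen only for illustration; they are not study data.

```python
from collections import Counter
from fractions import Fraction
from math import floor


def final_score(reviewer_scores):
    """Mean of reviewer scores, multiplied by 10, rounded to the nearest unit.

    Assumption: exact halves round up. Fractions keep the arithmetic exact,
    since whole and half-point scores are exactly representable.
    """
    scores = [Fraction(s) for s in reviewer_scores]
    mean = sum(scores) / len(scores)
    return floor(mean * 10 + Fraction(1, 2))


def count_tied(final_scores):
    """Number of applications that share a final score with at least one other."""
    counts = Counter(final_scores)
    return sum(n for n in counts.values() if n > 1)


# Hypothetical panel of 3 reviewers scoring 4 applications (illustrative only).
whole_digit = [[2, 2, 3], [2, 3, 2], [3, 3, 3], [3, 3, 3]]
half_point = [[2, 2, 2.5], [2.5, 3, 2], [3, 3, 3], [3, 3.5, 3]]

whole_finals = [final_score(s) for s in whole_digit]
half_finals = [final_score(s) for s in half_point]

print(whole_finals, count_tied(whole_finals))
print(half_finals, count_tied(half_finals))
```

In this made-up example, a single half-point adjustment per application is enough to separate applications that would otherwise share a final score, which is the effect the pilot set out to measure.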

Conclusions

Initial results of the half-point scoring system have been interpreted favorably. The Center for Scientific Review will conduct a full test of the half-point scoring system under real review conditions.

1Center for Scientific Review, National Institutes of Health, Bethesda, MD, USA, rnakamur@mail.nih.gov

Conflict of Interest Disclosures:

All authors are employees of the National Institutes of Health.

Funding/Support:

Study funded by the National Institutes of Health.

Role of the Funder/Sponsor:

The funder had no role in the design and conduct of the study; collection, management, analysis, and interpretation of the data; or preparation, review, or approval of the abstract.