Similarity Scores of Medical Research Manuscripts Before and After English-Language Editing

Abstract

Joon Seo Lim,1 Danielle A. Lee,1 Sung-Han Kim,2 Tae Won Kim3

Objective

Scientific journals use plagiarism detectors to check submitted manuscripts for potential plagiarism. However, there are concerns that these detectors are overly sensitive and flag commonly used phrases as potential plagiarism.1 This concern suggests that replacing grammatically awkward phrases with common expressions during editing may increase similarity scores even though neither the author nor the editor intended to plagiarize. Considering that the use of language editing services is growing, this study compared the iThenticate similarity scores of medical research manuscripts before and after English-language editing.

Design

This cross-sectional study was performed in January 2020 and is reported according to the STROBE guidelines. Fifty first-draft manuscripts written by researchers at a tertiary referral center in Seoul, South Korea, were randomly selected. All researchers were non–native-English-speaking Korean nationals. The similarity scores of the 50 manuscripts were assessed before and after English-language editing, which was provided by external vendors (40 [80%]) or in-house editors (10 [20%]). The default setting in iThenticate was used first, in line with standard practice at scientific journals. Then, considering that the mean sentence length in medical research papers is approximately 20 words,2 the “exclude matches that are less than: 20 words” filter was also applied to assess sentence-level similarities exclusively. To assess the degree of text similarity in each manuscript section, section-level similarity scores were measured manually by calculating the proportion of highlighted text in each section using ImageJ.
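The effect of the word-count filter described above can be illustrated with a minimal sketch. It assumes that a similarity score is simply the percentage of a manuscript's words that fall inside matched spans; iThenticate's actual scoring is not described in this abstract, and the `Match` structure, the `similarity_score` function, and the example numbers are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Match:
    """A flagged span of manuscript text (hypothetical structure)."""
    start: int   # index of the first matched word
    length: int  # number of consecutive matched words


def similarity_score(total_words, matches, min_words=0):
    """Percentage of words covered by matches of at least `min_words` words.

    Assumption: the score is the share of matched words; matches shorter
    than `min_words` are ignored, mimicking an "exclude matches that are
    less than N words" filter.
    """
    covered = set()
    for m in matches:
        if m.length >= min_words:
            covered.update(range(m.start, m.start + m.length))
    return 100.0 * len(covered) / total_words


# Hypothetical 500-word manuscript with four flagged spans.
matches = [Match(0, 5), Match(40, 8), Match(100, 25), Match(300, 12)]

default_score = similarity_score(500, matches)                 # all matches count
filtered_score = similarity_score(500, matches, min_words=20)  # only the 25-word match
print(default_score, filtered_score)
```

In this toy case, three of the four matches are short phrase-level overlaps, so applying the 20-word threshold removes them and leaves only the sentence-length match.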

Results

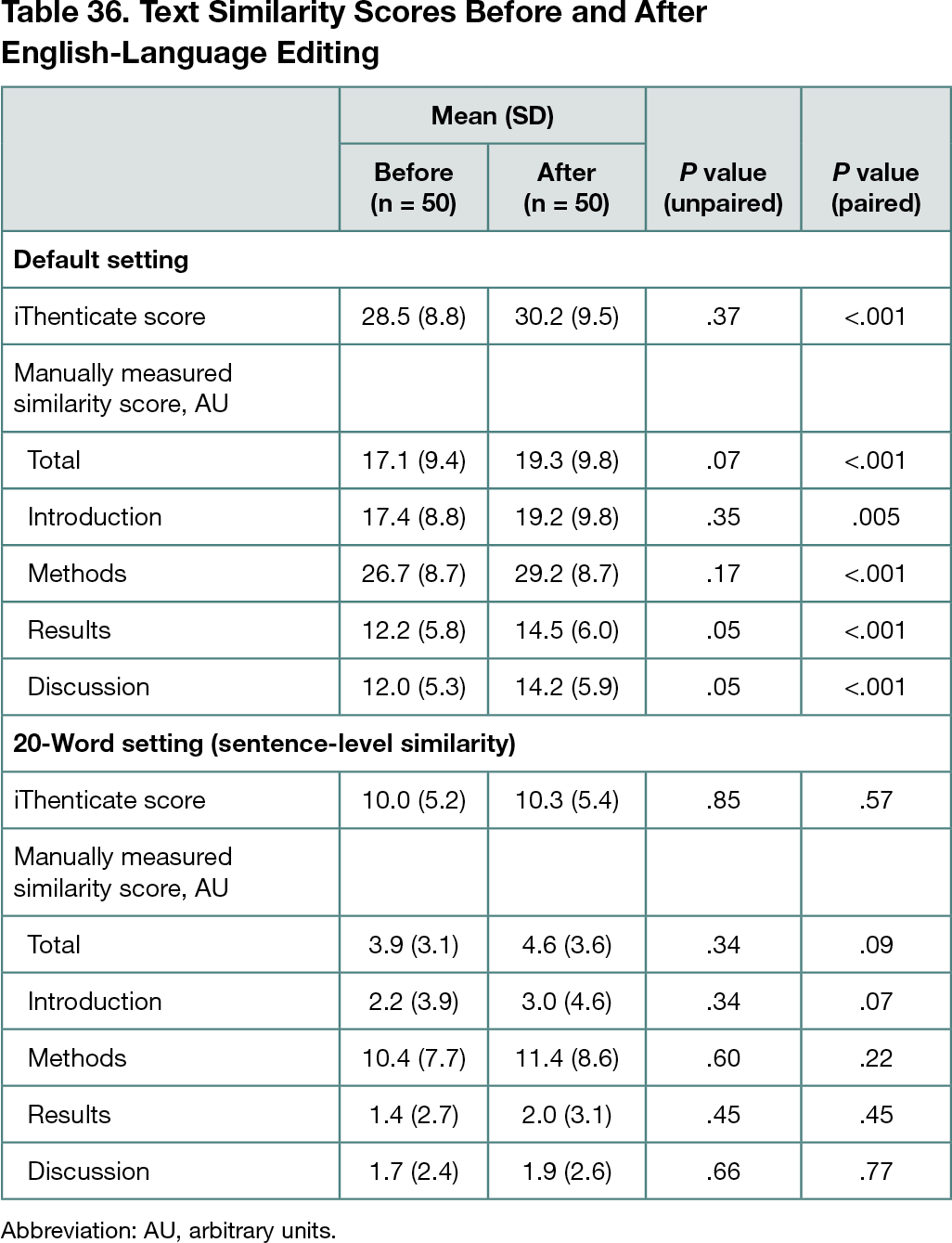

With the default settings, the mean similarity score of the 50 manuscripts increased from 28.5% to 30.2% after English-language editing; this increase was not statistically significant in an unpaired t test (P = .37) but was significant in a paired t test (P < .001) (Table 36). Manually measured similarity scores for each manuscript section (Introduction, Methods, Results, and Discussion) likewise increased significantly in a paired t test (P < .001) but not in an unpaired t test (P = .07); of the 4 sections, the Methods section had the highest mean similarity score both before and after English-language editing. When the “exclude matches that are less than: 20 words” filter was used to assess sentence-level similarity, the mean similarity score increased from 10.0% to 10.3%, which was not statistically significant in either the unpaired (P = .85) or the paired (P = .57) t test.
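The gap between the paired and unpaired results reported above can be illustrated with a small sketch. A paired test looks at the change within each manuscript, so a small but consistent increase stands out. An unpaired test compares that same change against the much larger spread between manuscripts. The scores below are hypothetical and are not the study data. The unpaired statistic uses the Welch form, which is an assumption because the abstract does not say which variant was used; with equal group sizes the pooled-variance form gives the same t value.

```python
import math
import statistics


def paired_t(before, after):
    """t statistic for a paired t test on per-item differences."""
    diffs = [a - b for b, a in zip(before, after)]
    se = statistics.stdev(diffs) / math.sqrt(len(diffs))
    return statistics.mean(diffs) / se


def unpaired_t(before, after):
    """t statistic for an unpaired (Welch) t test on two groups."""
    se = math.sqrt(
        statistics.variance(before) / len(before)
        + statistics.variance(after) / len(after)
    )
    return (statistics.mean(after) - statistics.mean(before)) / se


# Hypothetical similarity scores (%) for five manuscripts.
before = [10, 20, 30, 40, 50]
after = [12, 21, 33, 41, 53]

t_paired = paired_t(before, after)      # small, consistent within-manuscript gains
t_unpaired = unpaired_t(before, after)  # same gains, swamped by between-manuscript spread
print(round(t_paired, 3), round(t_unpaired, 3))
```

Every hypothetical manuscript gains 1 to 3 points, which yields a large paired t statistic, while the 2-point difference in group means is small relative to the between-manuscript variation, so the unpaired statistic stays close to zero.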

Conclusions

With the default setting of iThenticate, similarity scores showed a modest increase after English-language editing (mean change, 1.7 percentage points; P < .001 in paired t test). The default setting of iThenticate may lack the specificity to exclude inadvertent textual similarities, such as those resulting from the correction of grammatically incorrect phrases and the use of common phrases in the course of English-language editing.

References

1. Weber-Wulff D. Plagiarism detectors are a crutch, and a problem. Nature. 2019;567(7749):435. doi:10.1038/d41586-019-00893-5

2. Barbic S, Durisko Z. Readability assessment of psychiatry journals. Eur Sci Ed. 2015;41:3-10.

1Scientific Publications Team, Clinical Research Center, Asan Institute for Life Sciences, Asan Medical Center, University of Ulsan College of Medicine, Seoul, South Korea; 2Department of Infectious Diseases, Asan Medical Center, University of Ulsan College of Medicine, Seoul, South Korea; 3Department of Oncology, Asan Medical Center, University of Ulsan College of Medicine, Seoul, South Korea, twkimmd@amc.seoul.kr

Conflict of Interest Disclosures

None reported.

Funding/Support

This work was supported by a grant (#2019-781) from the Asan Institute for Life Sciences at Asan Medical Center (Seoul, South Korea).

Role of the Funder/Sponsor

The funder had no role in the design and conduct of the study; collection, management, analysis, and interpretation of the data; preparation, review, or approval of the abstract; and decision to submit the abstract for presentation.