Mitigating Subjectivity in Peer Review via Artificial Intelligence

Abstract

Henry Gouk,1 Nihar Shah2

Objective

Peer review suffers from subjectivity (also called commensuration bias)1,2: different reviewers place differing emphasis on the various criteria when combining them into an overall recommendation. Such subjectivity, and the resulting inconsistencies in the reviews, are said to contribute to the arbitrariness of the peer review process.1,2 In a top-tier venue’s peer review process, 2 types of inconsistencies due to such subjectivity were quantified, and an artificial intelligence (AI)–based strategy3 was deployed to mitigate such subjectivity.

Design

This study was conducted in the final decision phase of the Association for the Advancement of Artificial Intelligence Conference on Artificial Intelligence 2022, a top scientific conference in artificial intelligence that reviews full papers, is a terminal publication venue, and is considered on par with journals. Reviewers were asked to evaluate papers on 8 criteria and also provide an overall recommendation. The issue of subjectivity pertains to different reviewers using different mappings from the criteria to their overall recommendations. Subjectivity can also produce the following 2 types of inconsistency. For any review $r$, let $c_r$ denote the vector of criteria scores and $o_r$ denote the numeric overall recommendation. For any criteria score vectors $c$ and $c'$, write $c > c'$ if every entry of $c$ is at least as large as the corresponding entry of $c'$ and at least 1 entry of $c$ is strictly larger than the corresponding entry of $c'$. A pair of reviews $r$ and $s$ is said to be inconsistent if $c_r > c_s$ and $o_r \le o_s$, or if $c_r = c_s$ and $o_r \ne o_s$. The pair is strongly inconsistent if $c_r > c_s$ and $o_r < o_s$. An AI technique3 was deployed to mitigate such subjectivity. From the reviews, the AI learned a single mapping from criteria scores to overall recommendations that was representative of the entire set of reviews. It then applied the learned mapping to the criteria scores in each review to obtain an updated overall recommendation for that review. Subjectivity was mitigated because the updated overall recommendations for all reviews were obtained via the same mapping. The design of the AI also ensured that the updated overall recommendations were free of the aforementioned inconsistencies.
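The two inconsistency definitions above can be checked mechanically. The following is a minimal sketch, not the code used in the study: the function names `dominates` and `count_inconsistencies`, the representation of a review as a `(criteria, overall)` tuple, and the choice to count each unordered pair of reviews once are all assumptions made for illustration.

```python
from itertools import combinations

def dominates(c, c_prime):
    """Return True if c > c': every entry >= and at least one entry strictly >."""
    return (all(a >= b for a, b in zip(c, c_prime))
            and any(a > b for a, b in zip(c, c_prime)))

def count_inconsistencies(reviews):
    """Count inconsistent and strongly inconsistent pairs of reviews.

    `reviews` is a list of (criteria_scores, overall_recommendation) tuples.
    Each unordered pair is counted once (an assumption of this sketch).
    Strongly inconsistent pairs are also counted as inconsistent.
    """
    inconsistent = 0
    strong = 0
    for (c_r, o_r), (c_s, o_s) in combinations(reviews, 2):
        # Orient the pair so that c_r is the dominating vector, if any.
        if dominates(c_s, c_r):
            c_r, o_r, c_s, o_s = c_s, o_s, c_r, o_r
        if dominates(c_r, c_s):
            if o_r <= o_s:
                inconsistent += 1
            if o_r < o_s:
                strong += 1
        elif tuple(c_r) == tuple(c_s) and o_r != o_s:
            inconsistent += 1
    return inconsistent, strong

# Hypothetical reviews with 2 criteria each (the study used 8).
example_reviews = [((3, 3), 5), ((2, 3), 6), ((3, 3), 4), ((1, 4), 2)]
result = count_inconsistencies(example_reviews)
```

In this made-up example, the first and third reviews have identical criteria scores but different overall recommendations, and the second review has weaker criteria scores than both yet a higher overall recommendation, so `result` is `(3, 2)`.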

Results

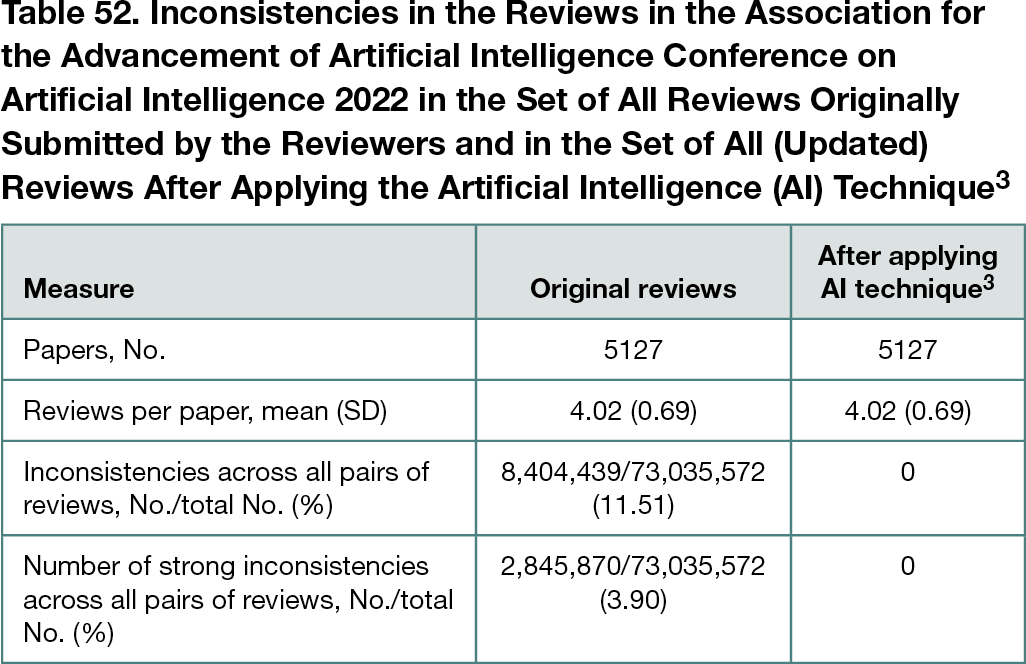

There were 177 reviews in which the original overall recommendation provided by the reviewer and the updated overall recommendation computed by the AI differed by 2 or more (on a 10-point scale); the equivalents of associate editors for these reviews were notified. The maximum such difference was 7. The number of inconsistencies in the reviews is shown in Table 52.
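The flagging rule described above amounts to thresholding the absolute difference between the two recommendations. A minimal sketch follows, assuming the original and updated recommendations are available as parallel lists; the function name `flag_large_shifts` and the sample scores are hypothetical and are not data from the study.

```python
def flag_large_shifts(original, updated, threshold=2):
    """Return indices of reviews whose original and updated overall
    recommendations differ by at least `threshold` points."""
    return [
        i
        for i, (o, u) in enumerate(zip(original, updated))
        if abs(o - u) >= threshold
    ]

# Hypothetical scores on a 10-point scale.
original_scores = [7, 4, 9, 5]
updated_scores = [6, 6, 2, 5]
flagged = flag_large_shifts(original_scores, updated_scores)
largest_shift = max(abs(o - u) for o, u in zip(original_scores, updated_scores))
```

Here `flagged` is `[1, 2]` and `largest_shift` is `7`; the threshold of 2 mirrors the notification rule stated in the text.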

Conclusions

A large number of inconsistencies were found across reviewers in how criteria were mapped to overall recommendations. An AI method3 was successfully deployed to mitigate subjectivity in peer reviews.

References

1. Lee CJ. Commensuration bias in peer review. Philos Sci. 2015;82(5):1272-1283. doi:10.1086/683652

2. Kerr S, Tolliver J, Petree D. Manuscript characteristics which influence acceptance for management and social science journals. Acad Manage J. 1977;20(1):132-141. doi:10.5465/255467

3. Noothigattu R, Shah N, Procaccia A. Loss functions, axioms, and peer review. J Artif Intelligence Res. 2021;70:1481-1515. doi:10.1613/jair.1.12554

1University of Edinburgh, Edinburgh, Scotland; 2Carnegie Mellon University, Pittsburgh, PA, USA, nihars@cs.cmu.edu

Conflict of Interest Disclosures

None reported.

Funding/Support

This work was supported by the US National Science Foundation CAREER award 1942124, which supports research on the fundamentals of learning from people with applications to peer review.