Examination of Adapting the Patient-Centered Outcomes Research Institute’s Multistakeholder Application Review Processes During COVID-19

Abstract

Laura P. Forsythe,1 Robin Bloodworth,1,2 Carolyn Mohan,1 Rachel C. Hemphill,1 Esther Nolton,1 Ponta Abadi,1,3 Lisa Stewart,1,4 Krista Woodward1,5

Objective

The Patient-Centered Outcomes Research Institute’s (PCORI’s) review of research applications uniquely includes patient and stakeholder reviewers alongside scientists. PCORI switched to virtual panel discussions in response to the COVID-19 pandemic. The few prior studies examining virtual review for health research were mostly small scale, provided mixed results, and did not consider multistakeholder processes.1,2 This study examined how virtual panels compared with in-person panels on reviewer scores and experiences, whether differences between panels varied by reviewer type (scientist, patient, or stakeholder), and reviewer perceptions of challenges and benefits of virtual panels.

Design

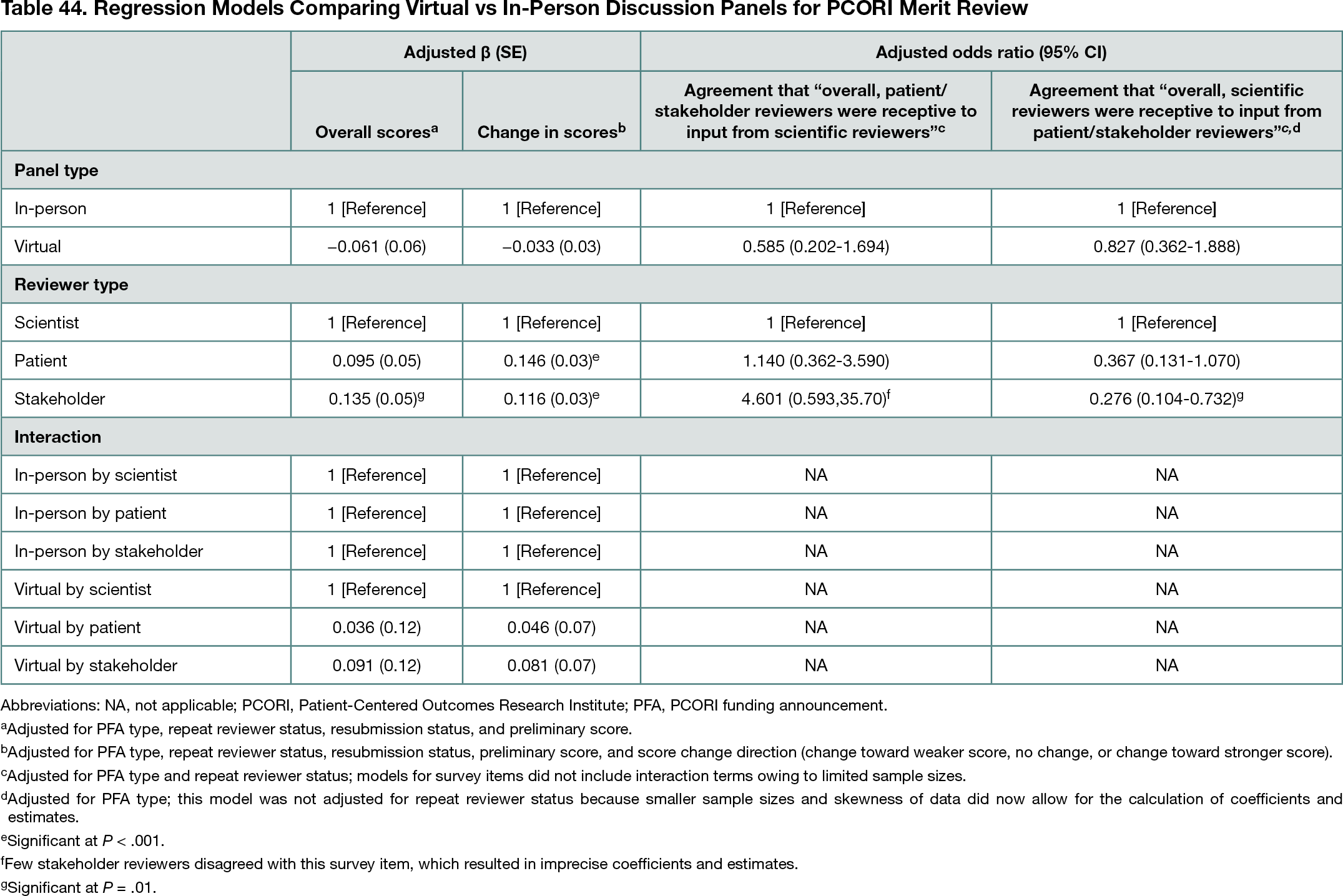

This cross-sectional, mixed-methods study analyzed data for PCORI funding opportunities before and after the switch to virtual review, including review score data (8 in-person cycles and 4 virtual cycles, 2017-2021) and closed- and open-ended responses from anonymous online surveys of reviewers (1 in-person cycle [2017] and 2 virtual cycles [2020-2021]). Virtual and in-person panels were compared on (1) final overall review scores and changes in overall scores before and after panel discussion for primary reviewers, using linear regression models that also examined the interaction of panel type and reviewer type; and (2) reviewer perspectives on giving and receiving input, using logistic regression (5-point Likert agreement scales dichotomized as agree vs neutral/disagree). Regression models controlled for reviewer and application characteristics. Sensitivity analyses included multilevel models to account for the hierarchy of reviewers and scores. For virtual cycles, open-ended survey responses were analyzed through inductive and deductive qualitative coding to identify themes regarding reviewers’ perceived challenges and benefits of virtual review.
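As a rough illustration of how the two kinds of outcome described above could be constructed before modeling, the following minimal sketch computes the absolute change in score across panel discussion and dichotomizes a 5-point Likert item as agree vs neutral/disagree. The records, field names (`panel`, `pre`, `post`, `likert`), and the coding of 4 and 5 as "agree" are assumptions made for this example and are not taken from the study; the regression models themselves are not reproduced here.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical records invented for illustration; NOT data from the study.
reviews = [
    {"panel": "in_person", "pre": 5, "post": 4},
    {"panel": "in_person", "pre": 3, "post": 3},
    {"panel": "virtual", "pre": 6, "post": 4},
    {"panel": "virtual", "pre": 2, "post": 3},
]
survey = [
    {"panel": "in_person", "likert": 5},
    {"panel": "in_person", "likert": 3},
    {"panel": "virtual", "likert": 4},
    {"panel": "virtual", "likert": 2},
]


def mean_abs_change_by_panel(rows):
    """Mean absolute difference between pre- and post-discussion scores."""
    changes = defaultdict(list)
    for row in rows:
        changes[row["panel"]].append(abs(row["post"] - row["pre"]))
    return {panel: mean(vals) for panel, vals in changes.items()}


def share_agree_by_panel(rows, agree_cutoff=4):
    """Share of responses coded as 'agree'.

    Assumes 5 = strongly agree and 4 = agree, so values at or above
    agree_cutoff count as agree and the rest as neutral/disagree.
    """
    flags = defaultdict(list)
    for row in rows:
        flags[row["panel"]].append(1 if row["likert"] >= agree_cutoff else 0)
    return {panel: mean(vals) for panel, vals in flags.items()}


abs_change = mean_abs_change_by_panel(reviews)
agree_share = share_agree_by_panel(survey)
```

In an analysis along these lines, the per-review absolute change and the binary agreement flag would then serve as the dependent variables in the linear and logistic models, respectively.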

Results

The analytic sample included 2897 reviews (2253 in-person, 644 virtual) and 388 survey responses (191 in-person, 197 virtual; 75%-83% response rate). Final review scores (mean [SD] for in-person, 4.7 [1.67]; for virtual, 4.5 [1.67]) and the absolute value of score changes (mean [SD] for in-person, 0.8 [0.96]; for virtual, 0.7 [0.97]) were similar between virtual and in-person panels (P > .05 for all) (Table 44), and the interactions of panel type and reviewer type were not significant (P > .05). In closed-ended survey items, most reviewers agreed that reviewers of each type (patient or stakeholder and scientist) were receptive to input from the other type (85%-96% across reviewer types), and agreement did not differ by panel type (P > .05 for all) (Table 44). In open-ended survey responses, reviewers noted challenges of virtual panels, including disruptions to discussion quality and flow, missing social interactions among reviewers, and technical and logistical issues; reviewers also noted benefits of virtual panels, including convenience and lack of travel.

Conclusions

Findings indicate that, despite some challenges, virtual review panels were similar to in-person panels on review scores and key aspects of reviewer experiences in a multistakeholder process. Virtual panels could be further considered as a viable approach for PCORI in the future to offer flexibility in circumstances beyond the COVID-19 pandemic.

References

1. Carpenter AS, Sullivan JH, Deshmukh A, Glisson SR, Gallo S. A retrospective analysis of the effect of discussion in teleconference and face-to-face scientific peer-review panels. BMJ Open. 2015;5:e009138. doi:10.1136/bmjopen-2015-009138

2. Gallo SA, Carpenter AS, Glisson SR. Teleconference versus face-to-face scientific peer review of grant application: effects on review outcomes. PLoS ONE. 2013;8(8):e71693. doi:10.1371/journal.pone.0071693

1Patient-Centered Outcomes Research Institute (PCORI), Washington, DC, USA, lforsythe@pcori.org; 2Abt Associates, Rockville, MD, USA; 3OCHIN Inc, Portland, OR, USA; 4Discovery USA, Chicago, IL, USA; 5Department of Population, Family, and Reproductive Health, Johns Hopkins Bloomberg School of Public Health, Baltimore, MD, USA

Conflict of Interest Disclosures

None reported.

Funding/Support

PCORI provided funding for this work.

Role of the Funder/Sponsor

PCORI staff planned and conducted all of the following aspects of this work: design and conduct of the study; collection, management, analysis, and interpretation of the data; preparation, review, or approval of the abstract; and decision to submit the abstract for presentation.

Acknowledgments

PCORI thanks everyone who has served as a Merit Reviewer for their time and invaluable input on research applications. The authors also thank Cary Scheiderer and Layla Lavasani (former PCORI staff) for sharing their insights about key elements of the review process and Sarah Cohen and Heidi Reichert of EpidStrategies for their consultation on statistical methods used in this abstract.