An Analysis of the History, Content, and Spin of Abstracts of COVID-19-Related Randomized Clinical Trials Posted as Preprints and Subsequently Published in Peer-Reviewed Journals or Unpublished

Abstract

Hannah Spungen,1 Jason Burton,2 Stephen Schenkel,3 David L. Schriger1

Objective

Preprint servers have gained traction in many academic fields. Most preprints are unlikely to adversely affect public health. However, during a pandemic, when there is an urgent need for data, preprints may cause harm by disseminating incomplete, incorrect, or misleading information.1,2 The aim of this study was to characterize and compare the characteristics, completeness, and spin of the abstracts of all randomized clinical trials (RCTs) related to COVID-19 posted to medRxiv from March 13, 2020, to December 31, 2021. An additional aim was to identify all corresponding published versions of these abstracts and perform a similar qualitative comparison to examine the impact of the peer review process.

Design

An experienced librarian identified all COVID-19–related RCT preprints posted to medRxiv and all versions subsequently published in peer-reviewed journals as of June 1, 2022. An assistant created identically formatted Word documents of all abstracts. After training and the confirmation of adequate interrater reliability, 3 blinded reviewers scored individual abstracts presented in random order for completeness using items from CONSORT for Abstracts. They then evaluated blinded medRxiv/published abstract pairs for differences in content and spin, using criteria modified from Boutron et al.3 Last, the abstracts of all unpublished preprints, along with an equal-sized sample of subsequently published preprints and their published counterparts, were assessed for extent of spin. Analysis was descriptive.

Results

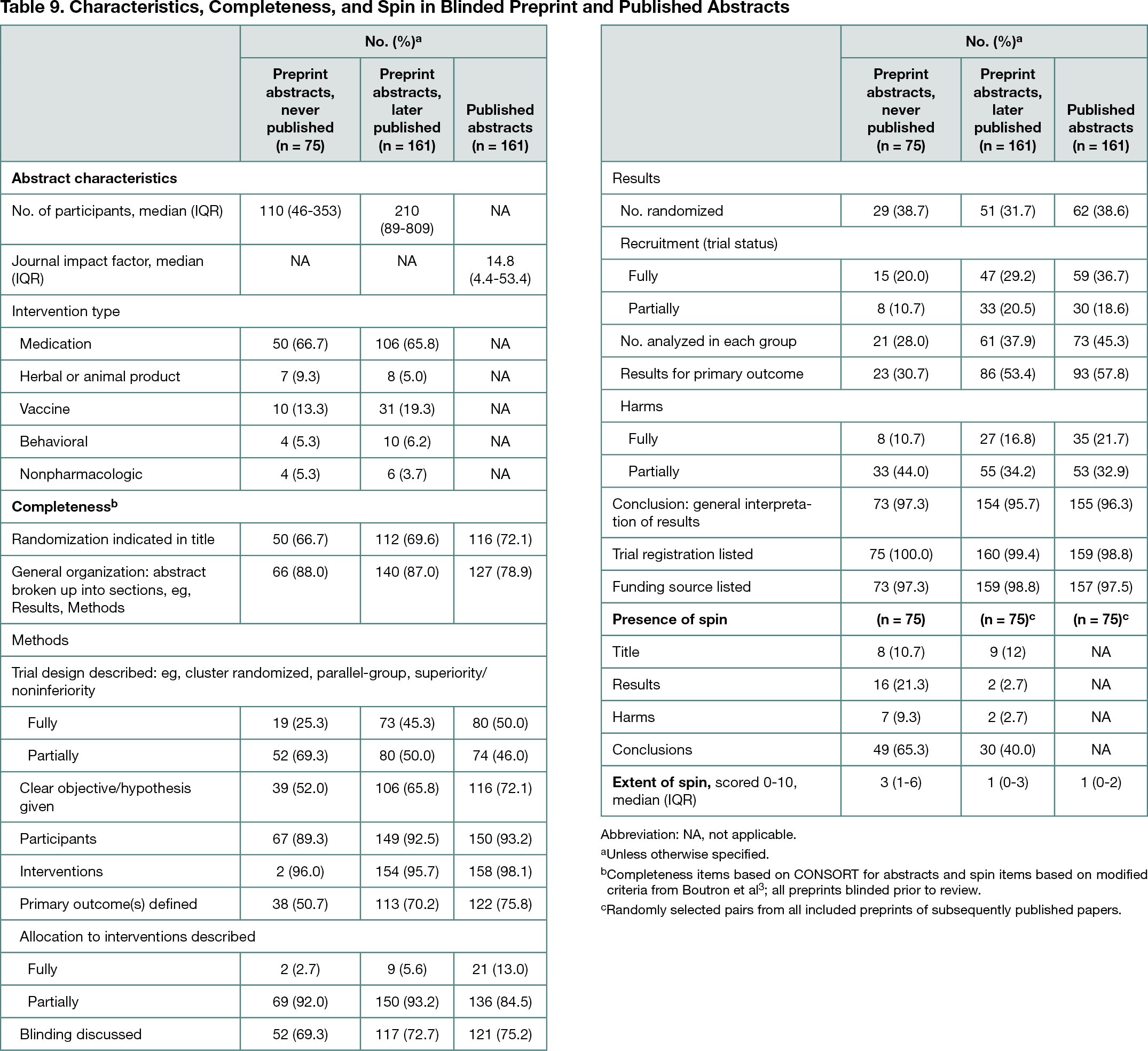

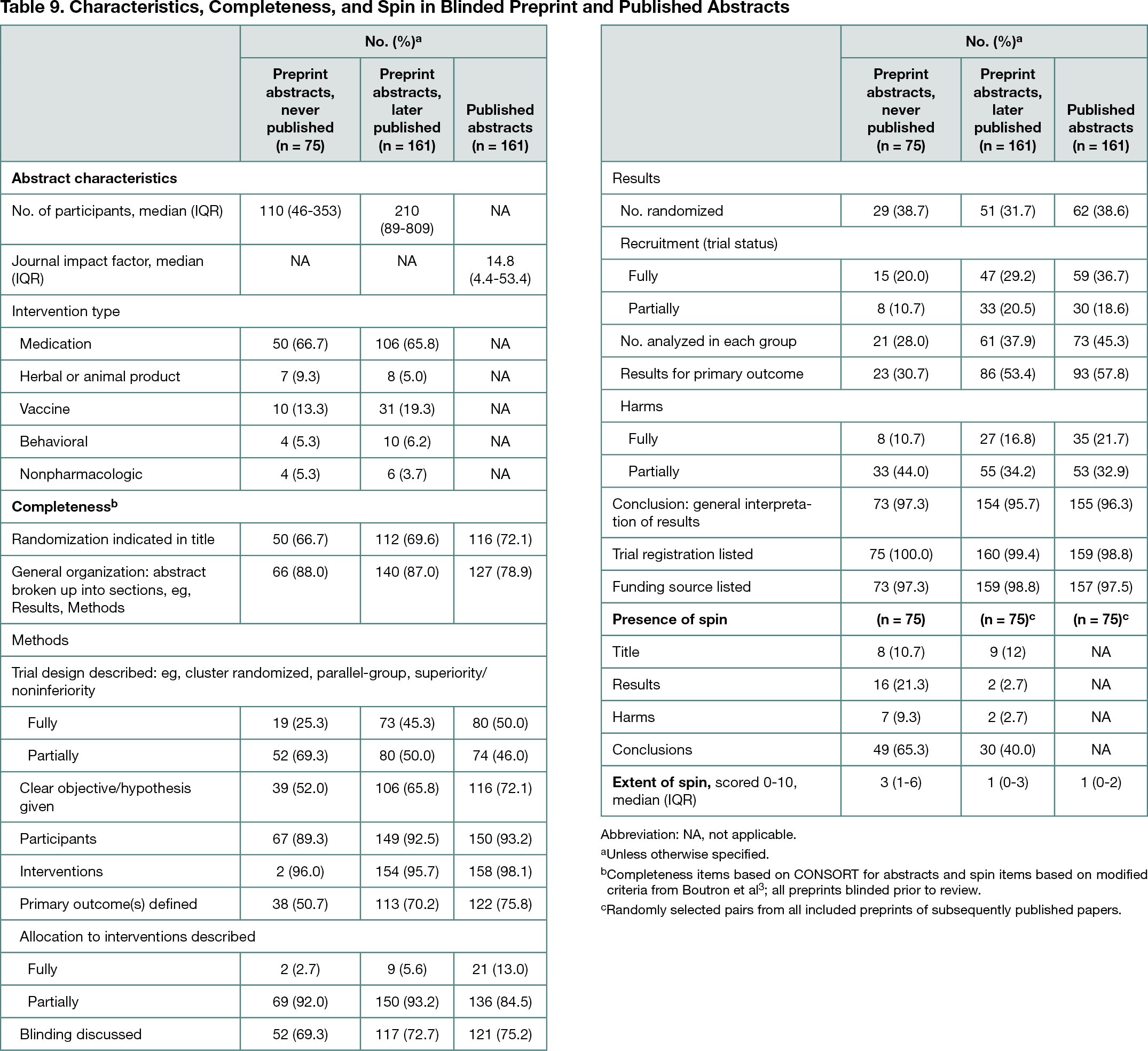

Two hundred ninety-one preprints were initially identified; 236 were confirmed as RCTs. Of these, 161 (68%) were found to have associated publications, which were published a median of 126 (IQR, 78-185; range, 0-654) days after medRxiv posting. The 75 unpublished preprints were posted a median of 344 (IQR, 235-429; range, 158-782) days prior to the final search. For most items, abstract completeness was higher in preprints that were subsequently published and was modestly higher still in published form (Table 9). The extent of spin was higher in unpublished preprints than in preprints that were subsequently published (Table 9). Last, of 161 published-preprint abstract pairs studied, 25% had more spin in the preprint version, 8% had more spin in the published version, and 66% had no difference in spin. Conversely, 12% had more consensible preprints, 42% had more consensible published versions, and 45% had no difference.