Use of an AI Peer Review Panel to Assess Manuscript Clarity, Novelty, and Impact

Abstract

Pawin Taechoyotin,1 Daniel E. Acuna1

Objective

Human reviewers face an excessive workload, to the point that the quality of peer reviews is often compromised. To alleviate this load, we developed and evaluated a multiagent AI peer review panel that considers text, figures, and citations to produce peer reviews. This study builds on advances in large language models (LLMs)1,2 and on prior work on AI agents for peer review,3 which so far has used only the textual content of the manuscript.

Design

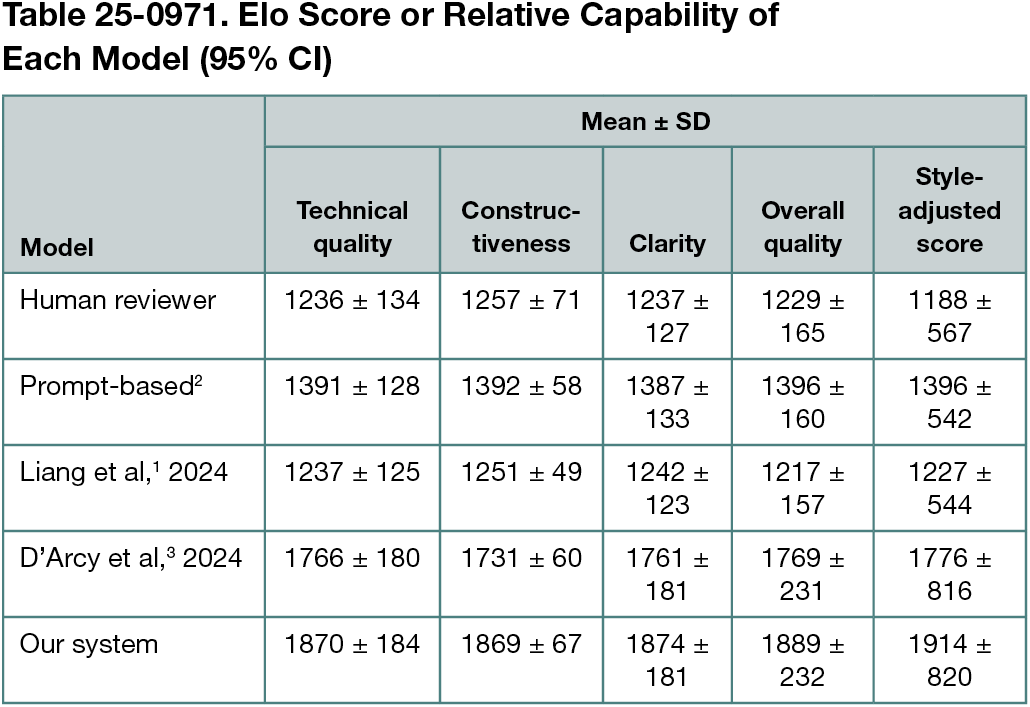

The AI panel consisted of a leader agent, an experiment agent, an impact agent, and a clarity agent. Each agent used zero-shot prompting with the LLM Claude Sonnet 3.5. Prompt engineering was used to craft the system and task prompts. The system prompts guided the agents to assume their specific roles, and the task prompts provided clear instructions on the tasks to perform. Novelty was assessed by comparing the manuscript with similar articles from an external database of published literature. The manuscript, novelty assessment, and figures were sent to each agent for analysis, and the leader agent compiled the information from all agents, requested clarifications if needed, and produced the final review. Our AI multiagent panel was evaluated and compared with human reviews as well as a simple prompt-based review2 and the findings of 2 previous studies.1,3 Graduate students performed the evaluation in an arena in which pairs of systems were selected based on their Elo scores. The Elo score measures the relative capability of each system based on its wins and losses in the arena against other systems and was calculated using the Bradley-Terry model. The system names were hidden from the participants to reduce bias. The participants evaluated the reviews on technical quality, constructiveness, clarity, and overall assessment before making their choice.
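As an illustration of how Elo-style scores can be derived from arena outcomes with the Bradley-Terry model, the following is a minimal sketch and not the study's actual code. The function name `bradley_terry_elo`, the system labels, the 400-point scale, the base rating of 1000, and the small `prior` pseudo-count (which keeps a system with zero wins from receiving an infinite negative score) are all assumptions made for this example.

```python
import math
from collections import defaultdict
from itertools import combinations


def bradley_terry_elo(matches, iterations=200, scale=400.0, base=1000.0, prior=0.5):
    """Fit Bradley-Terry strengths from (winner, loser) pairs and
    report them on an Elo-like scale (assumed: base 1000, 400 per decade)."""
    systems = sorted({s for match in matches for s in match})
    wins = defaultdict(float)   # wins[a] = total (pseudo-)wins of system a
    games = defaultdict(float)  # games[(a, b)] = matches played between a and b

    for winner, loser in matches:
        wins[winner] += 1.0
        games[(winner, loser)] += 1.0
        games[(loser, winner)] += 1.0

    # Assumed regularization: one virtual match per pair, split evenly.
    for a, b in combinations(systems, 2):
        wins[a] += prior
        wins[b] += prior
        games[(a, b)] += 2.0 * prior
        games[(b, a)] += 2.0 * prior

    strength = {s: 1.0 for s in systems}
    for _ in range(iterations):
        updated = {}
        for i in systems:
            # Minorization-maximization update for the Bradley-Terry likelihood.
            denom = sum(
                games[(i, j)] / (strength[i] + strength[j])
                for j in systems
                if j != i
            )
            updated[i] = wins[i] / denom if denom > 0 else strength[i]
        # Fix the scale by normalizing the geometric mean of strengths to 1.
        log_mean = sum(math.log(v) for v in updated.values()) / len(updated)
        strength = {s: v / math.exp(log_mean) for s, v in updated.items()}

    return {s: base + scale * math.log10(strength[s]) for s in systems}


# Hypothetical outcomes: system "A" beats system "B" in 3 of 4 matches.
example_matches = [("A", "B")] * 3 + [("B", "A")]
example_ratings = bradley_terry_elo(example_matches)
```

On this scale, the implied probability that one system beats another is `1 / (1 + 10 ** ((r_other - r_self) / 400))`, which equals the Bradley-Terry ratio of the two fitted strengths.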

Results

Eleven graduate students in the computer science department participated in this study, which resulted in 140 matches between pairs of systems. Systems with similar Elo scores were most likely to be paired together. Mean Elo scores of the reviews produced by our system were higher than those of human reviews (Table 25-0971). Further analysis showed that reviews produced by our system included more detailed assessments of the limitations and possible improvements of the manuscript than reviews produced by humans. However, our system tended to praise the work excessively, which human reviewers rarely did.
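The abstract does not state the exact rule used to pair systems, so the following is only a hypothetical sketch of one way to make similarly rated systems more likely to meet: each pair is weighted by an exponential decay in its rating gap. The names `pair_weights` and `sample_pair`, the `temperature` parameter, and the example ratings are assumptions for illustration, not values from the study.

```python
import itertools
import math
import random


def pair_weights(ratings, temperature=200.0):
    """Return a probability for each unordered pair of systems,
    favoring pairs whose ratings are close (assumed weighting rule)."""
    pairs = list(itertools.combinations(sorted(ratings), 2))
    raw = [math.exp(-abs(ratings[a] - ratings[b]) / temperature) for a, b in pairs]
    total = sum(raw)
    return {pair: weight / total for pair, weight in zip(pairs, raw)}


def sample_pair(ratings, rng=random, temperature=200.0):
    """Draw one pair of systems for the next arena match."""
    weights = pair_weights(ratings, temperature)
    pairs = list(weights)
    return rng.choices(pairs, weights=[weights[p] for p in pairs], k=1)[0]


# Hypothetical ratings, not results from the study.
demo_ratings = {"panel": 1100.0, "human": 1050.0, "baseline": 900.0}
demo_weights = pair_weights(demo_ratings)
demo_pair = sample_pair(demo_ratings, rng=random.Random(0))
```

A smaller `temperature` concentrates matches on near-equal systems, while a larger one approaches uniform pairing.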

Conclusions

Our AI peer review system demonstrated potential to aid the peer review of publications as a complement to human reviews. It might provide quick feedback to authors while human reviewers are reviewing the manuscript. Although the review might contain bias, authors can verify and use comments from this quick feedback as needed. Harms and the potential for negative effects were not studied. We acknowledge that the number of participants in this study was small; thus, a larger study might yield different results.

References

1. Liang W, Zhang Y, Cao H, et al. Can large language models provide useful feedback on research papers? A large-scale empirical analysis. NEJM AI. 2024;1(8):AIoa2400196. doi:10.1056/AIoa2400196

2. Wang H, Fu T, Du Y, et al. Scientific discovery in the age of artificial intelligence. Nature. 2023;620(7972):47-60. doi:10.1038/s41586-023-06221-2

3. D’Arcy M, Hope T, Birnbaum L, Downey D. MARG: multi-agent review generation for scientific papers. arXiv. Preprint posted online January 8, 2024. doi:10.48550/arXiv.2401.04259

1Science of Science and Computational Discovery Lab, Department of Computer Science, University of Colorado Boulder, Boulder, CO, US, daniel.acuna@colorado.edu.

Conflict of Interest Disclosures

Daniel E. Acuna reported being the founder of ReviewerZero AI LLC (https://www.reviewerzero.ai), a company focused on research integrity solutions, which may be relevant to the topic of this publication.