Reviewers’ Interpretation and Application of Research Quality Criteria in Grant Peer Review

Abstract

Rachel Claus1

Objective

Research quality criteria guide grant applications and reviewer evaluations. Research that crosses disciplinary boundaries requires more expansive quality criteria, but evaluation is challenging in this context because concepts of research quality are rooted in disciplinary tradition.1 Reviews are characterized by inconsistency and low agreement,2 problems that are exacerbated by poorly defined criteria.3 This presentation examines how consistently reviewers interpret and apply quality criteria and explores reasons for inconsistency.

Design

All scoring data were collected from 2 noncompetitive review processes of multimillion-dollar research program proposals aimed at improving food security. In 2021, 32 research proposals were evaluated using 17 criteria on a standard 4-point Likert scale (0-3), with limited reviewer overlap. In 2024, 9 proposals were evaluated using 12 criteria and 4 were evaluated using 11 criteria, with no reviewer overlap. Each proposal was assessed by a panel of 3 reviewers who scored independently before discussing scores to reach consensus. In total, 45 proposals were evaluated by 80 individual subject matter experts across 45 panels. Score consistency was measured per criterion in 2 ways: the discrepancy, defined as the difference between the highest and lowest scores awarded in a panel, and the number of reviewers who agreed in their individual assessments. The standard of consistency was met when at least 2 of 3 reviewers agreed and the discrepancy between individual reviewer scores was less than or equal to 1 Likert scale point. A total of 696 panel-level measures of consistency were computed. Cross tabulations were used to identify frequencies of inconsistency by criterion. Interviews with reviewers were conducted to understand perceived reasons for individual review discrepancies and disagreement, and individual and panel-level score justifications were analyzed to explore criteria interpretations.
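The consistency standard described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the study's analysis code: the function name `panel_consistency`, its parameters, and the example score triples are hypothetical, and "agreement" is read here as reviewers awarding identical scores. The final lines check that the reported proposal and criterion counts reproduce the 696 panel-level measures.

```python
from collections import Counter


def panel_consistency(scores, max_discrepancy=1, min_agreeing=2):
    """Evaluate one panel's scores on one criterion.

    Returns (discrepancy, n_agreeing, meets_standard), where discrepancy is
    the highest score minus the lowest, and n_agreeing is the size of the
    largest group of reviewers who gave the same score (an assumed reading
    of "agreed").
    """
    discrepancy = max(scores) - min(scores)
    n_agreeing = Counter(scores).most_common(1)[0][1]
    meets_standard = n_agreeing >= min_agreeing and discrepancy <= max_discrepancy
    return discrepancy, n_agreeing, meets_standard


# Hypothetical score triples on the 0-3 scale (not data from the study).
examples = [(2, 2, 3), (0, 2, 2), (1, 2, 3)]
for scores in examples:
    print(scores, panel_consistency(scores))

# Count check from the figures in the text: each proposal has one panel,
# and each panel yields one measure per criterion.
n_measures = 32 * 17 + 9 * 12 + 4 * 11
print(n_measures)
```

Under this reading, a panel that scores (0, 2, 2) has 2 reviewers in agreement but still fails the standard because the discrepancy is 2 points. For 3 whole-number scores, a discrepancy of at most 1 already implies that at least 2 reviewers gave the same score, so the discrepancy condition is the binding one in this sketch.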

Results

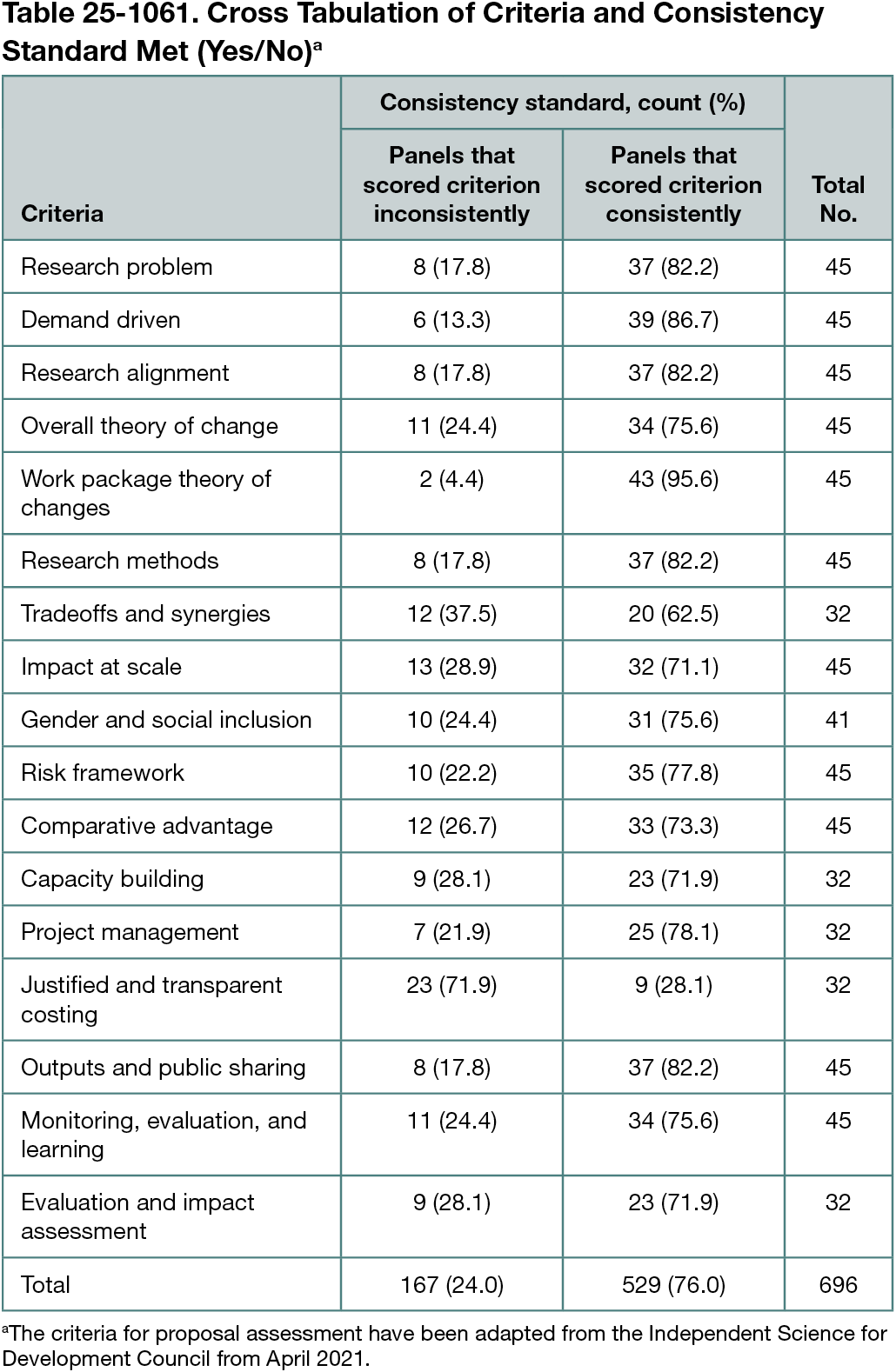

There was a statistically significant relationship between consistency and the evaluation criteria (χ² = 61.013; P < .001). Some criteria were more consistently applied than others. The frequency with which each criterion met the standard of consistency is presented in Table 25-1061. Reviewers more frequently applied the following criteria inconsistently when evaluating proposals: comparative advantage (26.7%); monitoring, evaluation, and learning (24.4%); and overall theory of change (24.4%). Justified and transparent costing had the highest rate of inconsistency (71.9%) in 2021 but was not evaluated in the 2024 review cycle. Reviewers perceived individual score discrepancies and disagreement as resulting from diverse disciplinary expertise and as a constructive way to achieve comprehensive quality assessments. More problematic reasons for discrepancies included misalignment of criteria to the application and different interpretations resulting from individual values and perceived abilities to make judgments.
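To show how a cross tabulation of criterion by consistency leads to a χ² statistic, the sketch below computes a Pearson chi-square statistic for a contingency table in plain Python. It assumes the reported statistic is a Pearson test of independence, which the abstract does not state explicitly. The function name `chi_square` and the toy counts are hypothetical and are not the study's data.

```python
def chi_square(table):
    """Pearson chi-square statistic and degrees of freedom for a table.

    `table` is a list of rows of observed counts, for example one row per
    criterion with columns (met standard, did not meet standard).
    """
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    total = sum(row_totals)

    statistic = 0.0
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            # Expected count if criterion and consistency were independent.
            expected = row_totals[i] * col_totals[j] / total
            statistic += (observed - expected) ** 2 / expected

    dof = (len(table) - 1) * (len(table[0]) - 1)
    return statistic, dof


# Toy counts for 2 hypothetical criteria (not data from the study):
# columns are (met standard, did not meet standard).
toy_table = [[30, 10], [20, 20]]
statistic, dof = chi_square(toy_table)
print(round(statistic, 3), dof)
```

The P value would then come from the chi-square distribution with `dof` degrees of freedom, for which a statistics library is the usual choice.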

Conclusions

The preliminary results indicate scope for clarifying criteria to improve the consistency of interpretation and the reliability of individual reviewers’ quality assessments of proposals. The approach can be adapted to test and inform improvements to quality criteria.

References

1. Defila R, Di Giulio A. Transdisciplinary development of quality criteria for transdisciplinary research. In: Regeer BJ, Klaassen P, Broerse JEW, eds. Transdisciplinarity for Transformation. Palgrave Macmillan, Cham; 2024. doi:10.1007/978-3-031-60974-9_5

2. Pier EL, Brauer M, Filut A, et al. Low agreement among reviewers evaluating the same NIH grant applications. Proc Natl Acad Sci U S A. 2018;115(12):2952-2957. doi:10.1073/pnas.1714379115

3. Abdoul H, Perrey C, Amiel P, et al. Peer review of grant applications: criteria used and qualitative study of reviewer practices. PLoS One. 2012;7(9):e46054. doi:10.1371/journal.pone.0046054

1Royal Roads University, Victoria, BC, Canada, rachel.claus@royalroads.ca.

Conflict of Interest Disclosures

None reported.

Funding/Support

This research was supported by the Sustainability Research Effectiveness Program, the BC Graduate Scholarship, the Royal Roads Doctoral Scholarship, and the David Harris Flaherty Scholarship.

Role of the Funder/Sponsor

The research was conducted as part of the Sustainability Research Effectiveness Program, which provided input to the design of the study, reviewed and approved the abstract, and provided input to the decision to submit the abstract for presentation. The scholarships provided general support; their funders had no role in the design and conduct of the study; collection, management, and interpretation of the data; preparation, review, or approval of the abstract; or decision to submit the abstract for presentation.